Root-Cause Analysis of a Docker Startup Failure in a Production Environment

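Tonight's plan was an emergency scale-out of the SDN components in the production environment, but Docker-Engine in the underlying base environment would not start, so the plan could not go ahead.

Although I tracked down the cause of the failure in the base environment, the paying customer decided to call it off for today, so I am writing up my train of thought as a running log instead.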

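Symptoms:

1 ps -aux shows a docker process and its watchdog process;

2 The file /var/run/docker.sock exists;

3 Querying the docker server reports that /var/run/docker.sock does not exist;

4 The Juju deployment platform reports that the docker environment was initialized successfully, but deploying the containerized SDN components fails with code 400;

5 ps -aux shows no containerd process.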

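Troubleshooting approach:

Check the Docker-Engine startup log.

Because Baidu's product R&D team repackaged the docker binaries, the Docker-Engine startup log is not printed to the console. I therefore killed the existing docker process and its watchdog process and started dockerd by hand.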

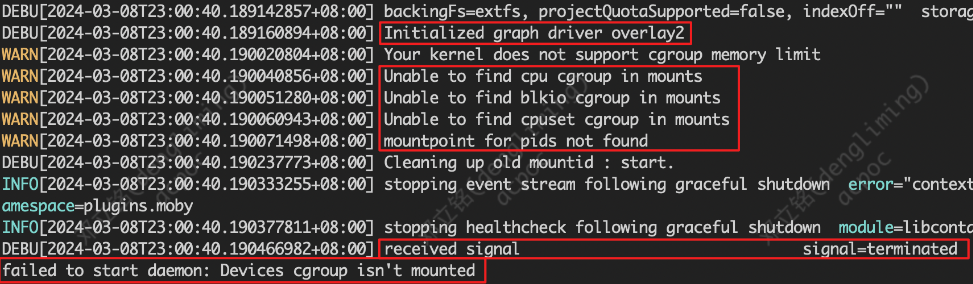

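The log shows that containerd and its plugins started correctly and that the overlay2 storage driver was initialized correctly, but the cgroup driver did not take effect: the cgroup virtual filesystems were not mounted.

This is exactly why dockerd's startup was aborted; the last line of the log reads "failed to start daemon: Devices cgroup isn't mounted". When dockerd stops, it first stops the containerd process it manages, which explains why no containerd process could be found.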
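The check I did by eye in the log can also be sketched as a small script. This is only a minimal sketch under assumptions: it assumes cgroup v1 (consistent with the pre-4.0 kernel warning in the log), and the function names and the list of required controllers are my own illustrative choices, not anything taken from the vendor tooling.

```python
# Controllers that dockerd warned about in the log, plus "devices", whose
# absence was the fatal error. This list is an illustrative assumption.
REQUIRED_CONTROLLERS = ("devices", "memory", "cpu", "blkio", "cpuset", "pids")


def cgroup_mount_options(mounts_text):
    """Collect the mount options of every cgroup (v1) entry in /proc/mounts-style text.

    The result mixes generic flags (rw, relatime, ...) with controller names;
    callers only test whether a controller name is present.
    """
    options = set()
    for line in mounts_text.splitlines():
        fields = line.split()
        # Fields: device, mount point, filesystem type, options, dump, pass
        if len(fields) < 4 or fields[2] != "cgroup":
            continue
        options.update(fields[3].split(","))
    return options


def missing_controllers(mounts_text, required=REQUIRED_CONTROLLERS):
    """Return the required controllers that have no cgroup mount, in the given order."""
    mounted = cgroup_mount_options(mounts_text)
    return [name for name in required if name not in mounted]
```

On a real host the input would be the contents of /proc/mounts, for example `missing_controllers(open("/proc/mounts").read())`; a non-empty result that includes "devices" would match the failure seen here.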

Solution:

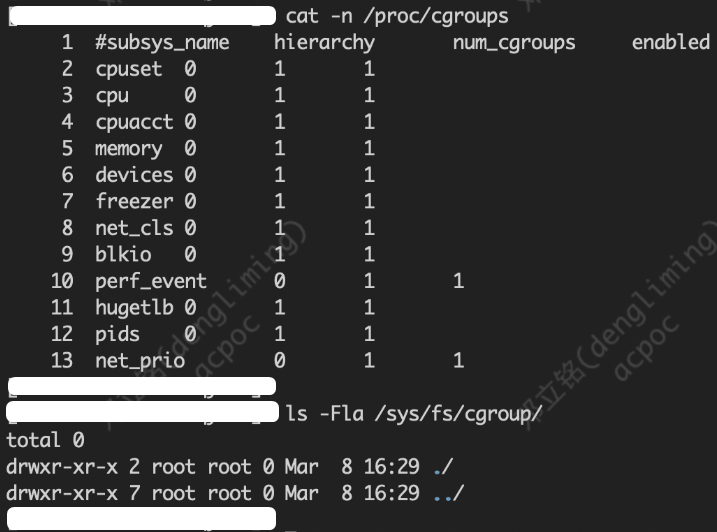

Remount the cgroup virtual filesystems.
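For readers who cannot see the screenshot, the general shape of a manual cgroup v1 remount can be sketched as below. This is not a transcript of the commands I ran: the /sys/fs/cgroup root, the one-directory-per-controller layout, and the helper name are assumptions, and the sketch also assumes /sys/fs/cgroup is already a writable tmpfs. The function only builds the commands so they can be reviewed before anyone runs them as root.

```python
CGROUP_ROOT = "/sys/fs/cgroup"  # conventional cgroup v1 location (assumption)


def cgroup_mount_commands(controllers, root=CGROUP_ROOT):
    """Build, but do not run, the commands that mount each cgroup v1 controller."""
    commands = []
    for name in controllers:
        target = f"{root}/{name}"
        # The mount point has to exist before mount(8) can use it.
        commands.append(["mkdir", "-p", target])
        # Standard cgroup v1 form: mount -t cgroup -o <controller> cgroup <dir>
        commands.append(["mount", "-t", "cgroup", "-o", name, "cgroup", target])
    return commands


# Print the commands for the controller named in the fatal error.
for command in cgroup_mount_commands(["devices"]):
    print(" ".join(command))
```

Keeping the commands as argument lists rather than one shell string means they can be handed to subprocess.run unchanged once they have been checked.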

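After remounting, I started dockerd by hand again, and this time it came up cleanly.

I then killed the manually started dockerd and let the watchdog start and supervise the dockerd process instead.

With that, the problem was solved.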

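Reflections:

1 The OS still had a dockerd process entry, which shows that dockerd was not unstartable from the beginning; in other words, docker was installed and configured correctly. So why were the cgroups not in effect? One plausible explanation is that Baidu's product R&D team set up the cgroup mount script to run once right after installation and never wrote the mounts into a configuration file to make them persistent, and that this batch of machines happened to go through an OS logout or reboot at some point after the OS was installed. The design is understandable to a degree: this is an OpenStack deployment on a customer-funded project, and once deployment is finished an IaaS-layer OS is not supposed to be rebooted outside of change control. The assumption is that the OS never shuts down or reboots unless the server hardware fails, in which case a new node is simply added to the cluster, so dockerd stays running the whole time.

Seen this way, the design capability of a big vendor's product R&D may be overrated and badly out of touch with how a customized private cloud is actually used and managed. The blame belongs to the requirements research and requirements review teams at project initiation, and to the delivery architecture and review teams.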

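2 Why did Juju-GUI report that docker was initialized successfully? One possible explanation is that Baidu's product R&D team forked and customized the community edition of Juju and judges the running state of a Linux daemon the wrong way: it treats a process found by ps as a daemon that is ready, and so reaches the wrong conclusion. On Linux, the existence of a PID does not mean the daemon is ready. A PID only means that a process has begun consuming system resources and has been registered with the scheduler. A daemon should be considered ready only when its startup log shows it doing real work and the unix socket it listens on actually carries traffic.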
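A readiness check along these lines can be sketched as follows. This is a minimal sketch of the idea, not how Juju or the vendor's fork does it: instead of looking for a PID or a socket file, it connects to the unix socket and asks the Docker Engine API's /_ping endpoint for an answer. The function name and the timeout are my own choices.

```python
import socket


def docker_daemon_ready(sock_path="/var/run/docker.sock", timeout=2.0):
    """Return True only if something answers GET /_ping on the socket with HTTP 200."""
    request = b"GET /_ping HTTP/1.0\r\nHost: docker\r\n\r\n"
    try:
        with socket.socket(socket.AF_UNIX, socket.SOCK_STREAM) as client:
            client.settimeout(timeout)
            client.connect(sock_path)
            client.sendall(request)
            reply = client.recv(1024)
    except OSError:
        # Covers a missing file, a stale socket file with no listener,
        # a permission error, and a timeout.
        return False
    # The status line looks like: HTTP/1.0 200 OK
    parts = reply.split(b"\r\n", 1)[0].split()
    return len(parts) >= 2 and parts[1] == b"200"
```

A socket file left behind with no daemon listening makes this return False, which is the situation symptoms 2 and 3 describe, whereas a check based on ps or on the file's existence would pass.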

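From this angle, a big-company halo does not necessarily come with all-round practical capability. It also shows that every trade has its specialists, and that leaders who can bring together top people from different specialties and pull them in one direction are scarce even in big companies full of experts.

The dockerd startup log is as follows: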

WARN[2024-03-08T23:00:40.159413885+08:00] The "-g / --graph" flag is deprecated. Please use "--data-root" instead

WARN[2024-03-08T23:00:40.159679263+08:00] [!] DON'T BIND ON ANY IP ADDRESS WITHOUT setting --tlsverify IF YOU DON'T KNOW WHAT YOU'RE DOING [!]

DEBU[2024-03-08T23:00:40.159798003+08:00] Listener created for HTTP on tcp (127.0.0.1:2375)

WARN[2024-03-08T23:00:40.160597823+08:00] could not change group /var/run/docker.sock to docker: group docker not found

DEBU[2024-03-08T23:00:40.160680244+08:00] Listener created for HTTP on unix (/var/run/docker.sock)

DEBU[2024-03-08T23:00:40.160701444+08:00] Containerd not running, starting daemon managed containerd

INFO[2024-03-08T23:00:40.161261969+08:00] libcontainerd: started new containerd process pid=1694

INFO[2024-03-08T23:00:40.161315982+08:00] parsed scheme: "unix" module=grpc

INFO[2024-03-08T23:00:40.161330419+08:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2024-03-08T23:00:40.161352240+08:00] ccResolverWrapper: sending update to cc: {[{unix:///var/run/docker/containerd/containerd.sock 0 }] } module=grpc

INFO[2024-03-08T23:00:40.161368841+08:00] ClientConn switching balancer to "pick_first" module=grpc

INFO[2024-03-08T23:00:40.173767305+08:00] starting containerd revision=35bd7a5f69c13e1563af8a93431411cd9ecf5021 version=v1.2.12

DEBU[2024-03-08T23:00:40.173837840+08:00] changing OOM score to -999

INFO[2024-03-08T23:00:40.174036177+08:00] loading plugin "io.containerd.content.v1.content"... type=io.containerd.content.v1

INFO[2024-03-08T23:00:40.174065849+08:00] loading plugin "io.containerd.snapshotter.v1.btrfs"... type=io.containerd.snapshotter.v1

WARN[2024-03-08T23:00:40.174152367+08:00] failed to load plugin io.containerd.snapshotter.v1.btrfs error="path /home/work/docker/containerd/daemon/io.containerd.snapshotter.v1.btrfs must be a btrfs filesystem to be used with the btrfs snapshotter"

INFO[2024-03-08T23:00:40.174169746+08:00] loading plugin "io.containerd.snapshotter.v1.aufs"... type=io.containerd.snapshotter.v1

WARN[2024-03-08T23:00:40.174962226+08:00] failed to load plugin io.containerd.snapshotter.v1.aufs error="modprobe aufs failed: "FATAL: Module aufs not found.\\n": exit status 1"

INFO[2024-03-08T23:00:40.174980036+08:00] loading plugin "io.containerd.snapshotter.v1.native"... type=io.containerd.snapshotter.v1

INFO[2024-03-08T23:00:40.175008476+08:00] loading plugin "io.containerd.snapshotter.v1.overlayfs"... type=io.containerd.snapshotter.v1

INFO[2024-03-08T23:00:40.175072809+08:00] loading plugin "io.containerd.snapshotter.v1.zfs"... type=io.containerd.snapshotter.v1

INFO[2024-03-08T23:00:40.175159018+08:00] skip loading plugin "io.containerd.snapshotter.v1.zfs"... type=io.containerd.snapshotter.v1

INFO[2024-03-08T23:00:40.175172055+08:00] loading plugin "io.containerd.metadata.v1.bolt"... type=io.containerd.metadata.v1

WARN[2024-03-08T23:00:40.175190448+08:00] could not use snapshotter zfs in metadata plugin error="path /home/work/docker/containerd/daemon/io.containerd.snapshotter.v1.zfs must be a zfs filesystem to be used with the zfs snapshotter: skip plugin"

WARN[2024-03-08T23:00:40.175203385+08:00] could not use snapshotter btrfs in metadata plugin error="path /home/work/docker/containerd/daemon/io.containerd.snapshotter.v1.btrfs must be a btrfs filesystem to be used with the btrfs snapshotter"

WARN[2024-03-08T23:00:40.175223826+08:00] could not use snapshotter aufs in metadata plugin error="modprobe aufs failed: "FATAL: Module aufs not found.\\n": exit status 1"

INFO[2024-03-08T23:00:40.175313528+08:00] loading plugin "io.containerd.differ.v1.walking"... type=io.containerd.differ.v1

INFO[2024-03-08T23:00:40.175332445+08:00] loading plugin "io.containerd.gc.v1.scheduler"... type=io.containerd.gc.v1

INFO[2024-03-08T23:00:40.175372046+08:00] loading plugin "io.containerd.service.v1.containers-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175391242+08:00] loading plugin "io.containerd.service.v1.content-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175407575+08:00] loading plugin "io.containerd.service.v1.diff-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175423726+08:00] loading plugin "io.containerd.service.v1.images-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175441589+08:00] loading plugin "io.containerd.service.v1.leases-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175461802+08:00] loading plugin "io.containerd.service.v1.namespaces-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175476520+08:00] loading plugin "io.containerd.service.v1.snapshots-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.175490884+08:00] loading plugin "io.containerd.runtime.v1.linux"... type=io.containerd.runtime.v1

INFO[2024-03-08T23:00:40.175548440+08:00] loading plugin "io.containerd.runtime.v2.task"... type=io.containerd.runtime.v2

INFO[2024-03-08T23:00:40.175591357+08:00] loading plugin "io.containerd.monitor.v1.cgroups"... type=io.containerd.monitor.v1

INFO[2024-03-08T23:00:40.175967314+08:00] loading plugin "io.containerd.service.v1.tasks-service"... type=io.containerd.service.v1

INFO[2024-03-08T23:00:40.176011538+08:00] loading plugin "io.containerd.internal.v1.restart"... type=io.containerd.internal.v1

INFO[2024-03-08T23:00:40.176053642+08:00] loading plugin "io.containerd.grpc.v1.containers"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176073608+08:00] loading plugin "io.containerd.grpc.v1.content"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176091713+08:00] loading plugin "io.containerd.grpc.v1.diff"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176108131+08:00] loading plugin "io.containerd.grpc.v1.events"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176122397+08:00] loading plugin "io.containerd.grpc.v1.healthcheck"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176143040+08:00] loading plugin "io.containerd.grpc.v1.images"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176159596+08:00] loading plugin "io.containerd.grpc.v1.leases"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176177310+08:00] loading plugin "io.containerd.grpc.v1.namespaces"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176194658+08:00] loading plugin "io.containerd.internal.v1.opt"... type=io.containerd.internal.v1

INFO[2024-03-08T23:00:40.176231728+08:00] loading plugin "io.containerd.grpc.v1.snapshots"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176249303+08:00] loading plugin "io.containerd.grpc.v1.tasks"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176267092+08:00] loading plugin "io.containerd.grpc.v1.version"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176286304+08:00] loading plugin "io.containerd.grpc.v1.introspection"... type=io.containerd.grpc.v1

INFO[2024-03-08T23:00:40.176464927+08:00] serving... address="/var/run/docker/containerd/containerd-debug.sock"

INFO[2024-03-08T23:00:40.176512710+08:00] serving... address="/var/run/docker/containerd/containerd.sock"

INFO[2024-03-08T23:00:40.176529921+08:00] containerd successfully booted in 0.003226s

DEBU[2024-03-08T23:00:40.182679702+08:00] Started daemon managed containerd

DEBU[2024-03-08T23:00:40.183153810+08:00] Golang's threads limit set to 2041380

INFO[2024-03-08T23:00:40.183375121+08:00] parsed scheme: "unix" module=grpc

INFO[2024-03-08T23:00:40.183385742+08:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2024-03-08T23:00:40.183397827+08:00] ccResolverWrapper: sending update to cc: {[{unix:///var/run/docker/containerd/containerd.sock 0 }] } module=grpc

INFO[2024-03-08T23:00:40.183404786+08:00] ClientConn switching balancer to "pick_first" module=grpc

INFO[2024-03-08T23:00:40.183952917+08:00] parsed scheme: "unix" module=grpc

INFO[2024-03-08T23:00:40.183963831+08:00] scheme "unix" not registered, fallback to default scheme module=grpc

INFO[2024-03-08T23:00:40.183974252+08:00] ccResolverWrapper: sending update to cc: {[{unix:///var/run/docker/containerd/containerd.sock 0 }] } module=grpc

INFO[2024-03-08T23:00:40.183980076+08:00] ClientConn switching balancer to "pick_first" module=grpc

DEBU[2024-03-08T23:00:40.184430093+08:00] Using default logging driver json-file

DEBU[2024-03-08T23:00:40.184471798+08:00] [graphdriver] trying provided driver: overlay2

DEBU[2024-03-08T23:00:40.184837627+08:00] processing event stream module=libcontainerd namespace=plugins.moby

WARN[2024-03-08T23:00:40.189068986+08:00] Using pre-4.0.0 kernel for overlay2, mount failures may require kernel update storage-driver=overlay2

DEBU[2024-03-08T23:00:40.189142857+08:00] backingFs=extfs, projectQuotaSupported=false, indexOff="" storage-driver=overlay2

DEBU[2024-03-08T23:00:40.189160894+08:00] Initialized graph driver overlay2

WARN[2024-03-08T23:00:40.190020804+08:00] Your kernel does not support cgroup memory limit

WARN[2024-03-08T23:00:40.190040856+08:00] Unable to find cpu cgroup in mounts

WARN[2024-03-08T23:00:40.190051280+08:00] Unable to find blkio cgroup in mounts

WARN[2024-03-08T23:00:40.190060943+08:00] Unable to find cpuset cgroup in mounts

WARN[2024-03-08T23:00:40.190071498+08:00] mountpoint for pids not found

DEBU[2024-03-08T23:00:40.190237773+08:00] Cleaning up old mountid : start.

INFO[2024-03-08T23:00:40.190333255+08:00] stopping event stream following graceful shutdown error="context canceled" module=libcontainerd namespace=plugins.moby

INFO[2024-03-08T23:00:40.190377811+08:00] stopping healthcheck following graceful shutdown module=libcontainerd

DEBU[2024-03-08T23:00:40.190466982+08:00] received signal signal=terminated

failed to start daemon: Devices cgroup isn't mounted
