
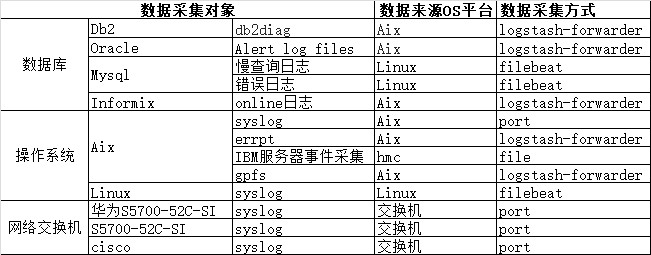

Data collection targets and collection methods for the ELK Stack log management and analysis platform in insurance business systems:

Once Logstash is configured, data collection can begin:

    input {
      file {
        type => "wechat-log"
        path => ["/usr/local/tomcat/logs/wechat/*.log"]
        # Lines that do not start with "[timestamp]" are appended to the previous event
        codec => multiline {
          pattern => "^\[%{TIMESTAMP_ISO8601}\]"
          what => "previous"
          negate => true
        }
        start_position => "beginning"
      }
    }

    filter {
      grok {
        # Each custom capture ends in "*" so that it matches the whole field rather than a single character
        match => ["message", "\[%{TIMESTAMP_ISO8601:logdate}\] - (?<merchant>[\b\w\s]*) - (?<openid>[\u4e00-\u9fa5\b\w\s]*) - (?<queryType>[\b\w\s]*) - (?<orderId>[\b\w\s]*) - (?<wechatOrderId>[\b\w\s]*) - (?<input>[\b\w\s]*) - (?<source>[\b\w\s]*) - %{WORD:level}\s%{JAVACLASS:class}:%{NUMBER:lineNumber} - (?<msg>[\W\w\S\s]*)"]
      }
      # Use the timestamp from the log line as the event time
      date {
        match => ["logdate", "yyyy-MM-dd HH:mm:ss.SSS"]
        target => "@timestamp"
      }
    }

    output {
      elasticsearch {
        hosts => "192.168.10.100:9200"
        index => "logstash-%{type}"
        template_overwrite => true
      }
    }
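To make the multiline codec settings above (`negate => true`, `what => "previous"`) more concrete, here is a minimal Python sketch of the same grouping idea. It is an illustration only, not how Logstash implements the codec: the regular expression is a simplified stand-in for `%{TIMESTAMP_ISO8601}`, and the sample log lines are invented.

```python
import re

# Simplified stand-in for ^\[%{TIMESTAMP_ISO8601}\]; the real grok pattern accepts more variants.
EVENT_START = re.compile(r"^\[\d{4}-\d{2}-\d{2}[ T]\d{2}:\d{2}:\d{2}")


def group_multiline(lines):
    """Append every line that does not start a new event to the previous event."""
    events = []
    for line in lines:
        if EVENT_START.match(line) or not events:
            events.append(line)          # a new event begins here
        else:
            events[-1] += "\n" + line    # continuation, e.g. a stack trace line
    return events


# Invented sample: one error with a two-line stack trace, then one normal line.
sample = [
    "[2017-03-01 10:00:00.123] - m1 - ERROR com.example.Pay:42 - failed",
    "java.lang.IllegalStateException: boom",
    "    at com.example.Pay.run(Pay.java:42)",
    "[2017-03-01 10:00:01.456] - m1 - INFO com.example.Pay:50 - ok",
]
events = group_multiline(sample)
print(len(events))
```

With this grouping, a Java stack trace stays attached to the log line that produced it, so the grok filter sees one complete event.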

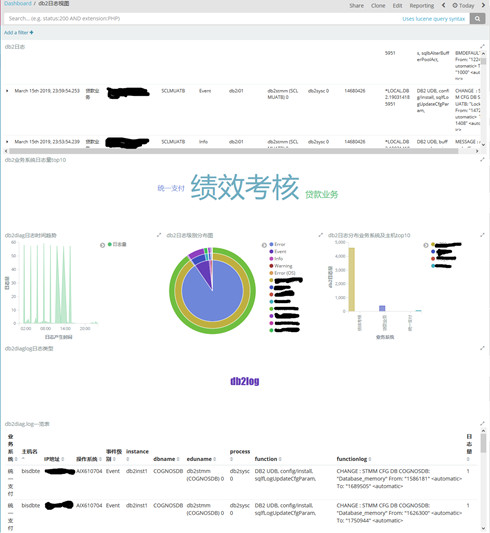

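The following shows how data is collected from, and then displayed for, the Db2 databases and IBM Power servers (minicomputers) that the insurance business systems use most heavily.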

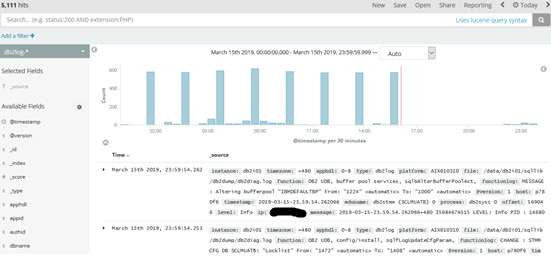

db2diag log collection is used as the example here; other log types can follow the db2diag setup.

Source-side configuration (logstash-forwarder):

    {
      "network": {
        "servers": [ "ip:5063" ],
        "ssl ca": "/home/sysadmin/certs/keystore.jks"
      },
      "files": [
        {
          "paths": [ "/home/db2inst1/sqllib/db2dump/db2diag.log" ],
          "fields": {"type": "db2log", "env": "贷款业务", "platform": "AIX610704", "ip": "ipxx"}
        }
      ]
    }

The `fields` entry adds tags for the business system (`env`, here 贷款业务, the loan business), the OS platform, and the IP address; `type` is used by Logstash to classify events.

Server-side configuration:

    input {
      lumberjack {
        port => 5063
        type => "db2log"
        ssl_certificate => "/etc/pki/tls/certs/logstash-forwarder.crt"
        ssl_key => "/etc/pki/tls/private/logstash-forwarder.key"
        # A db2diag record starts with a timestamp; all other lines belong to the previous record
        codec => multiline {
          charset => "UTF-8"
          pattern => "^\d{4}-\d{2}-\d{2}-\d{2}\.\d{2}\.\d{2}\.\d{6}[\+-]\d{3}"
          negate => true
          what => "previous"
        }
      }
    }

    filter {
      if [type] == "db2log" {
        # Flatten the multi-line record into a single line before parsing
        mutate {
          gsub => ['message', "\n", " "]
        }
        grok {
          match => { "message" => "(?<timestamp>%{YEAR}-%{MONTHNUM}-%{MONTHDAY}-%{HOUR}\.%{MINUTE}\.%{SECOND})%{INT:timezone}(?:%{SPACE}%{WORD:recordid}%{SPACE})(?:LEVEL%{SPACE}:%{SPACE}%{DATA:level}%{SPACE})(?:PID%{SPACE}:%{SPACE}%{INT:processid}%{SPACE})(?:TID%{SPACE}:%{SPACE}%{INT:threadid}%{SPACE})(?:PROC%{SPACE}:%{SPACE}%{DATA:process}%{SPACE})?(?:INSTANCE%{SPACE}:%{SPACE}%{WORD:instance}%{SPACE})?(?:NODE%{SPACE}:%{SPACE}%{WORD:node}%{SPACE})?(?:DB%{SPACE}:%{SPACE}%{WORD:dbname}%{SPACE})?(?:APPHDL%{SPACE}:%{SPACE}%{NOTSPACE:apphdl}%{SPACE})?(?:APPID%{SPACE}:%{SPACE}%{NOTSPACE:appid}%{SPACE})?(?:AUTHID%{SPACE}:%{SPACE}%{WORD:authid}%{SPACE})?(?:HOSTNAME%{SPACE}:%{SPACE}%{HOSTNAME:hostname}%{SPACE})?(?:EDUID%{SPACE}:%{SPACE}%{INT:eduid}%{SPACE})?(?:EDUNAME%{SPACE}:%{SPACE}%{DATA:eduname}%{SPACE})?(?:FUNCTION%{SPACE}:%{SPACE}%{DATA:function}%{SPACE})(?:probe:%{SPACE}%{INT:probe}%{SPACE})%{GREEDYDATA:functionlog}" }
        }
        date {
          match => [ "timestamp", "YYYY-MM-dd-HH.mm.ss.SSSSSS" ]
        }
        # Discard records that the grok pattern could not parse
        if "_grokparsefailure" in [tags] {
          drop {}
        }
      }
    }

    output {
      if [type] == "db2log" {
        elasticsearch {
          hosts => ["ip:9200"]
          index => "db2log-%{+YYYY.MM.dd}"
          user => "xxxx"
          password => "xxxx"
        }
        stdout {
          codec => rubydebug
        }
      }
    }
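The record header that the multiline `pattern` and the `timestamp`/`timezone` grok captures above rely on can be checked outside Logstash. The following Python sketch parses a db2diag-style header, assuming the trailing signed number is the offset from UTC in minutes; the sample line and the function name `parse_db2diag_timestamp` are invented for illustration.

```python
import re
from datetime import datetime, timedelta, timezone

# Same shape as the multiline pattern: YYYY-MM-DD-HH.MM.SS.ffffff followed by a signed 3-digit offset.
HEADER = re.compile(r"^(\d{4}-\d{2}-\d{2}-\d{2}\.\d{2}\.\d{2}\.\d{6})([+-]\d{3})")


def parse_db2diag_timestamp(line):
    """Return a timezone-aware datetime for a record header line, or None if it is not a header."""
    m = HEADER.match(line)
    if m is None:
        return None
    local = datetime.strptime(m.group(1), "%Y-%m-%d-%H.%M.%S.%f")
    offset = timedelta(minutes=int(m.group(2)))  # assumption: offset is given in minutes
    return local.replace(tzinfo=timezone(offset))


# Invented sample header line.
ts = parse_db2diag_timestamp("2017-03-01-10.11.12.123456+480 I12345E456 LEVEL: Error")
print(ts.isoformat())
```

A line for which the function returns `None` is a continuation line, which is the case the multiline codec folds into the previous record.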

Kibana display

Log collection configuration for IBM Power servers:

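A shell script, run as a crontab scheduled job, collects and aggregates HMC events centrally every day. This involves two steps:

1. Establish a trust relationship with the HMC;

2. Run the shell script remotely on a schedule.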
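The source does not show the collection script itself. As a rough sketch of the two steps above, the Python snippet below builds an `ssh` command that runs the HMC command `lssvcevents` and appends the output to a local file. It assumes key-based SSH trust is already in place; the host name, the `hscroot` user, the choice of `lssvcevents -t hardware`, and the output path are assumptions for illustration, not details taken from the original setup.

```python
import subprocess


def build_hmc_command(hmc_host, user="hscroot", days=1):
    """Build the ssh command that lists hardware service events of the last `days` days."""
    remote = f"lssvcevents -t hardware -d {int(days)}"
    # BatchMode makes ssh fail instead of prompting when key-based trust is missing.
    return ["ssh", "-o", "BatchMode=yes", f"{user}@{hmc_host}", remote]


def collect_hmc_events(hmc_host, out_path, **kwargs):
    """Run the command and append its output to out_path; returns the number of lines collected."""
    result = subprocess.run(
        build_hmc_command(hmc_host, **kwargs),
        capture_output=True, text=True, check=True, timeout=120,
    )
    with open(out_path, "a", encoding="utf-8") as fh:
        fh.write(result.stdout)
    return len(result.stdout.splitlines())


cmd = build_hmc_command("hmc01.example.com")  # hypothetical host name
print(" ".join(cmd))
```

A crontab entry would then call `collect_hmc_events` once a day, and the resulting file could be picked up by a Logstash `file` input.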

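AIX errpt logs need additional fields, and the corresponding definition has to be added to the ODM. The entries are then formatted so that they can be forwarded in real time. The configuration is as follows: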

errpt2logstash

Send events from AIX error report (errpt) to a logstash server

install

    cp errpt2logstash.pl /usr/local/bin/errpt2logstash.pl
    chown root:system /usr/local/bin/errpt2logstash.pl
    chmod 750 /usr/local/bin/errpt2logstash.pl

customize the configuration file errpt2logstash.conf

    cp errpt2logstash.conf /etc/errpt2logstash.conf
    chown root:system /etc/errpt2logstash.conf
    chmod 660 /etc/errpt2logstash.conf
    odmadd errpt2logstash.add

example logstash input configuration

    input {
      tcp {
        port => 5555
        type => "errpt"
        codec => json
      }
    }

example logstash filter and output configuration

    filter {
      #
      # AIX ERRPT
      # define and handle critical messages
      #
      if [type] == "errpt" {
        if [errpt_error_class] == "H" or [facility_label] == "Hardware" {
          if [errpt_error_type] == "PERM" or [severity_label] == "Permanent" {
            #
            # PERM H exclude list
            #
            # 07A33B6A SC_TAPE_ERR4 PERM H TAPE DRIVE FAILURE
            # 4865FA9B TAPE_ERR1 PERM H TAPE OPERATION ERROR
            # 68C66836 SC_TAPE_ERR1 PERM H TAPE OPERATION ERROR
            # E1D8D4A4 SC_TAPE_ERR2 PERM H TAPE DRIVE FAILURE
            # BFE4C025 SCAN_ERROR_CHRP PERM H UNDETERMINED ERROR
            #
            if [errpt_error_id] not in ["68C66836", "07A33B6A", "E1D8D4A4", "4865FA9B", "BFE4C025"] {
              mutate {
                add_tag => [ "critical" ]
              }
            }
          }
        }
        #
        # overall include list
        #
        # 0975DD6C KERNEL_ABEND PERM S KERNEL ABNORMALLY TERMINATED
        # 4B97B439 J2_METADATA_CORRUPT UNKN U FILE SYSTEM CORRUPTION
        # AE3E3FAD J2_FSCK_INFO INFO O FSCK FOUND ERRORS
        # B6DB68E0 J2_FSCK_REQUIRED INFO O FILE SYSTEM RECOVERY REQUIRED
        # C4C3339D LGPG_FREED INFO S ONE OR MORE LARGE PAGES HAS BEEN CONVERT
        # C5C09FFA PGSP_KILL PERM S SOFTWARE PROGRAM ABNORMALLY TERMINATED
        # FE2DEE00 AIXIF_ARP_DUP_ADDR PERM S DUPLICATE IP ADDRESS DETECTED IN THE NET
        #
        if [errpt_error_id] in ["0975DD6C", "4B97B439", "AE3E3FAD", "B6DB68E0", "C4C3339D", "C5C09FFA", "FE2DEE00"] {
          mutate {
            add_tag => [ "critical" ]
          }
        }
        #
        # Forward
        #
        if "critical" in [tags] {
          throttle {
            # max. one alert within five minutes per host and errpt identifier
            before_count => -1
            after_count => 1
            key => "%{logsource}:%{errpt_error_id}"
            period => 300
            add_tag => [ "throttled" ]
          }
        }
      }
    }

    output {
      # email is an output plugin, so the alert is sent from the output section
      if [type] == "errpt" and "critical" in [tags] and "throttled" not in [tags] {
        email {
          from => "logstash@server.de"
          subject => "CRITICAL: %{logsource} - %{errpt_description}"
          to => "admin@server.de"
          via => "sendmail"
          body => "%{message}"
          options => { "location" => "/usr/sbin/sendmail" }
        }
      }
    }
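The throttling rule in the configuration above (at most one alert per host and errpt identifier within five minutes) can be illustrated with a small Python sketch. This is a simplified model written for this explanation, not the Logstash throttle filter's actual implementation; the class name and the explicit `now` argument are assumptions chosen to keep the example deterministic.

```python
class Throttle:
    """Allow `after_count` events per key within `period` seconds; flag the rest as throttled."""

    def __init__(self, period=300, after_count=1):
        self.period = period
        self.after_count = after_count
        self._windows = {}  # key -> (window_start, events_seen_in_window)

    def is_throttled(self, key, now):
        start, count = self._windows.get(key, (now, 0))
        if now - start >= self.period:
            start, count = now, 0  # the previous window has expired, start a new one
        count += 1
        self._windows[key] = (start, count)
        return count > self.after_count


throttle = Throttle(period=300, after_count=1)
key = "aixhost01:0975DD6C"  # same shape as "%{logsource}:%{errpt_error_id}"
results = [throttle.is_throttled(key, t) for t in (0, 10, 299, 300)]
print(results)
```

Only events for which `is_throttled` returns `False` would trigger an email, which matches the `"throttled" not in [tags]` condition.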

testing

Logstash server

    /opt/logstash/bin/logstash agent -e 'input {tcp { port => 5555 codec => json }} output { stdout { codec => rubydebug }}'

AIX server

    errlogger "Hello World"
    logger -plocal0.crit "Hello World"
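If no AIX host is at hand, the Logstash side of the test above can also be exercised by sending a hand-built JSON event to the TCP input. The sketch below is an assumption-laden stand-in for what errpt2logstash.pl sends: the field names are the ones referenced in the filter configuration, but the exact payload of the real script is not shown in the source, and the host name in the usage note is hypothetical.

```python
import json
import socket


def build_event(logsource, error_id, error_class, error_type, description, message):
    """Serialize one test event as a newline-terminated JSON document."""
    event = {
        "logsource": logsource,
        "errpt_error_id": error_id,
        "errpt_error_class": error_class,
        "errpt_error_type": error_type,
        "errpt_description": description,
        "message": message,
    }
    return (json.dumps(event) + "\n").encode("utf-8")


def send_event(host, port, payload):
    """Open a TCP connection to the Logstash tcp input and send the payload."""
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(payload)


payload = build_event("aixhost01", "0975DD6C", "S", "PERM",
                      "KERNEL ABNORMALLY TERMINATED", "Hello World")
print(payload.decode("utf-8").strip())
```

Calling `send_event("logstash.example.com", 5555, payload)` against the test instance started above should make the event appear in the `rubydebug` output.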

deinstall

    odmdelete -q 'en_name=errpt2logstash' -o errnotify
    rm /usr/local/bin/errpt2logstash.pl
    rm /etc/errpt2logstash.conf

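Using the collected HMC logs, the ELK Stack log management and analysis platform for insurance business systems runs a daily hardware check on the Power servers. Permissions are assigned per team, so each operations group views and analyzes the logs for its own area and looks for potential faults. When a business process behaves abnormally, logs can be viewed and analyzed across the whole business system. Historical logs are retained for data analysis. User login behavior and operation records are collected for security auditing. Application system logs are consolidated so that logs are collected across the entire platform. Zabbix is integrated to monitor logs and raise alerts.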