Sequel 2: Standby CF fails to take over after simulating a primary CF failure in a DB2 pureScale environment?

Following https://blog.csdn.net/qingsong3333/article/details/73701015, I rebuilt a DB2 pureScale cluster on virtual machines. After simulating a crash of the primary CF, the standby CF failed to take over.

db2inst1@suse2:~> db2instance -list

ID TYPE STATE HOME_HOST CURRENT_HOST ALERT PARTITION_NUMBER LOGICAL_PORT NETNAME

0 MEMBER STARTED suse2 suse2 NO 0 0 suse2

1 MEMBER STOPPED suse1 suse1 YES 0 0 suse1

128 CF ERROR suse1 suse1 YES - 0 suse1

129 CF PEER suse2 suse2 NO - 0 suse2

HOSTNAME STATE INSTANCE_STOPPED ALERT

suse2 ACTIVE NO NO

suse1 INACTIVE NO YES

There is currently an alert for a member, CF, or host in the data-sharing instance. For more information on the alert, its impact, and how to clear it, run the following command: 'db2cluster -cm -list -alert'.

db2inst1@suse2:~>
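In the listing above, neither CF is PRIMARY: CF 128 is in ERROR and CF 129 stays in PEER, which is the symptom of the failed takeover. Below is a minimal sketch for spotting this automatically. It assumes the column layout shown above (ID, TYPE, STATE first); `check_cf_states` is a hypothetical helper name, not a DB2 tool.

```shell
# Minimal sketch (assumes the `db2instance -list` column order shown above:
# ID TYPE STATE ...). Reads the listing on stdin, prints "ID STATE" for every
# CF row, and exits non-zero when no CF is in the PRIMARY state.
check_cf_states() {
  awk '
    $2 == "CF" {
      print $1, $3
      if ($3 == "PRIMARY") primary = 1
    }
    END {
      if (!primary) {
        print "WARNING: no CF is in PRIMARY state" > "/dev/stderr"
        exit 1
      }
    }
  '
}

# Usage: db2instance -list | check_cf_states
```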

db2inst1@suse2:~> db2cluster -cm -list -alert

1.

Alert: Cluster node 'suse1' is not responding and has been placed in the INACTIVE state.

Action: Correct the condition causing this alert by performing the following troubleshooting steps: 1) Verify that the host machine is powered on. 2) Verify the status of the cluster manager by issuing the 'db2cluster -cm -list -host -state' command on the host machine. If the cluster manager is stopped on the host machine, start the cluster manager by issuing the 'db2cluster -cm -start -host <hostname>' command. 3) Verify the connectivity of the host machine. This alert will automatically clear itself when the host is ACTIVE.

Impact: While the host is INACTIVE, the DB2 members on this host will be in restart light mode on other hosts and will be in the WAITING_FOR_FAILBACK state. Any CF defined on the host will not be able to start, and the host will not be available as a target for restart light.

db2inst1@suse2:~>

suse2:/var/adm/ras # tail -f mmfs.log.latest

Fri Jan 4 17:00:24.321 2019: [N] Node 192.168.10.200 (suse1-priv) lease renewal is overdue. Pinging to check if it is alive

Fri Jan 4 17:00:24.322 2019: [I] Lease is overdue. Probing cluster gpfsdomain.suse1-priv

Fri Jan 4 17:00:25.323 2019: [N] Disk lease period expired 0.160 seconds ago in cluster gpfsdomain.suse1-priv. Attempting to reacquire lease.

Fri Jan 4 17:02:24.465 2019: [I] Waiting for challenge 30 (node 1, sequence 25) to be responded during disk election

Fri Jan 4 17:02:48.022 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 26 err 1143)

Fri Jan 4 17:02:50.010 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 26 err 79)

Fri Jan 4 17:02:51.020 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 26 err 1143)

Fri Jan 4 17:03:01.030 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 26 err 1143)

Fri Jan 4 17:03:02.043 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 25 err 1143)

Fri Jan 4 17:03:02.542 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 25 err 79)

Fri Jan 4 17:03:03.553 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 25 err 1143)

Fri Jan 4 17:03:05.542 2019: [N] CCR: failed to connect to node 192.168.10.200:1191 (sock 25 err 79)

Fri Jan 4 17:03:05.543 2019: [I] This node got elected. Sequence: 31

Fri Jan 4 17:03:05.547 2019: [N] Disk lease reacquired in cluster gpfsdomain.suse1-priv.

Fri Jan 4 17:03:05.548 2019: [D] Election completed. Details: OLLG: 17187965 OLLG delta: 41

Fri Jan 4 17:03:05.549 2019: [E] Node 192.168.10.200 (suse1-priv) is being expelled due to expired lease.

Fri Jan 4 17:03:05.556 2019: [I] Node 192.168.10.201 (suse2-priv) is now the Group Leader.

Fri Jan 4 17:03:05.581 2019: [I] Recovering nodes: 192.168.10.200

Fri Jan 4 17:03:05.593 2019: [N] This node (192.168.10.201 (suse2-priv)) is now Cluster Manager for gpfsdomain.suse1-priv.

Fri Jan 4 17:03:05.660 2019: [N] Node 192.168.10.201 (suse2-priv) appointed as manager for mirlv.

Fri Jan 4 17:03:05.661 2019: [N] Node 192.168.10.201 (suse2-priv) appointed as manager for db2instance.

Fri Jan 4 17:03:06.488 2019: [I] Node 192.168.10.201 (suse2-priv) completed take over for mirlv.

Fri Jan 4 17:03:06.672 2019: [I] Node 192.168.10.201 (suse2-priv) completed take over for db2instance.

Fri Jan 4 17:03:06.673 2019: [I] Recovered 1 nodes for file system db2instance.
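The GPFS log shows that the file-system layer did recover. Suse2 won the disk election, expelled suse1 and completed the takeover of both file systems (mirlv and db2instance) less than three minutes after suse1's lease became overdue. Below is a small sketch for pulling these milestones out of a longer log. The match patterns are just the message texts in this excerpt, so other GPFS releases may word them differently. `gpfs_failover_timeline` is a hypothetical name.

```shell
# Sketch: print "time message" for the failover milestones in a GPFS log such
# as /var/adm/ras/mmfs.log.latest. Reads the files given as arguments, or stdin.
# The patterns are the message texts seen in this excerpt (an assumption: other
# GPFS releases may word them differently).
gpfs_failover_timeline() {
  grep -hE 'lease renewal is overdue|is being expelled|This node got elected|is now Cluster Manager|completed take over' "$@" |
    awk '{
      t = $4                    # time field, e.g. 17:03:05.549
      i = index($0, "] ")       # end of the [N]/[E]/[I] severity tag
      print t, substr($0, i + 2)
    }'
}

# Usage: gpfs_failover_timeline /var/adm/ras/mmfs.log.latest
```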

Attachments:

主机配置信息.txt (host configuration information), containing the DB2 pureScale and other configuration parameters

Logs from the suse2:/var/ct/db2domain_20181223121052/log/mc/IBM.RecoveryRM directory:

trace.6.sp.txt

trace.7.sp.txt

trace_pub.1.sp.txt

trace_pub.4.sp.txt

Logs from the suse2:/var/ct/db2domain_20181223121052/log/mc/IBM.GblResRM directory:

trace.8.sp.txt

trace_event.1.sp.txt

Logs from the /home/db2inst1/sqllib/db2dump/DIAG0000 directory:

db2diag.txt

db2inst1.nfy.txt

Could someone please help analyze the cause?

Attachments:

主机配置信息.txt (20.35 KB)

trace.6.sp.txt (1.18 MB)

trace.7.sp.txt (70.14 KB)

trace_pub.1.sp.txt (417.36 KB)

trace_pub.4.sp.txt (523.03 KB)

trace.8.sp.txt (1.52 KB)

trace_event.1.sp.txt (1.52 KB)

db2diag.txt (2.55 MB)

db2inst1.nfy.txt (4.82 KB)

2 peer answers

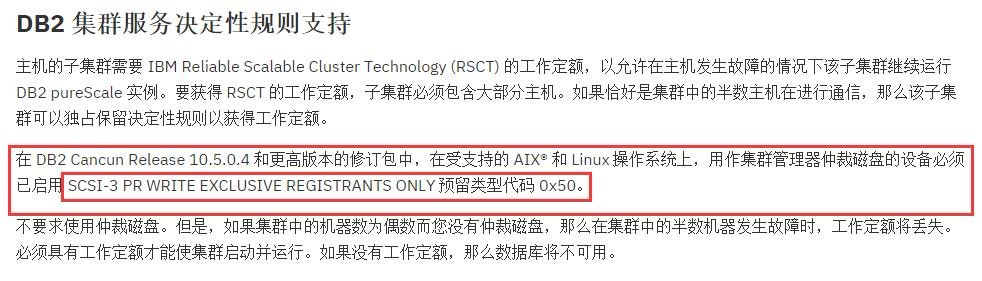

After finishing the installation and configuration, I ran:

suse1:~ # db2cluster -cm -set -tiebreaker -disk /dev/sdb (Note: the command reported success; /dev/sdb is a shared disk that does not support SCSI-3 PR.)

After it succeeded, I checked:

suse1:~ # db2cluster -cm -list -tiebreaker

The current quorum device is of type Disk with the following specifics: /dev/sdb

Checking the status now:

Cluster quorum (tiebreaker):

suse1:~ # db2cluster -cm -list -tiebreaker

The current quorum device is of type Operator. (The quorum disk is no longer shown here.)

suse1:~ # db2cluster -cfs -list -tiebreaker

The current quorum device is of type Disk with the following specifics: /dev/sdd.
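Here the two layers disagree. The cluster manager (`-cm`) tiebreaker has fallen back to Operator, while the cluster file system (`-cfs`) still uses /dev/sdd. Below is a sketch for catching that regression. It assumes the one-line message format shown above. `quorum_type` and `check_cm_tiebreaker` are hypothetical helper names.

```shell
# Sketch: extract the quorum device type (e.g. Disk, Operator) from the output
# of `db2cluster -cm -list -tiebreaker` or `db2cluster -cfs -list -tiebreaker`.
quorum_type() {
  sed -n 's/^The current quorum device is of type \([A-Za-z]*\).*/\1/p'
}

# Hypothetical check (run on a cluster host): fail when the cluster-manager
# tiebreaker is no longer a disk.
check_cm_tiebreaker() {
  cm_type=$(db2cluster -cm -list -tiebreaker | quorum_type)
  if [ "$cm_type" != "Disk" ]; then
    echo "WARNING: cluster manager tiebreaker is '$cm_type', not Disk" >&2
    return 1
  fi
}
```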

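Through company resources I contacted IBM vendor technical support. Their answer was that the tiebreaker (quorum) disk must support SCSI-3 PR (persistent reservation).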

I then used StarWind software to emulate a disk that supports SCSI-3 PR, which the system recognized as /dev/sdi. Comparing the two disks:

suse1:~ # sg_persist -c /dev/sdb

VMware, VMware Virtual S 1.0

Peripheral device type: disk

persistent reservation in: transport: Host_status=0x10 [DID_TARGET_FAILURE]

Driver_status=0x08 [DRIVER_SENSE, SUGGEST_OK]

PR in (Report capabilities): command not supported (SCSI-3 PR not supported)

suse1:~ # sg_persist -c /dev/sdi

ROCKET IMAGEFILE 0001

Peripheral device type: disk

persistent reservation in: transport: Host_status=0x10 [DID_TARGET_FAILURE]

Driver_status=0x08 [DRIVER_SENSE, SUGGEST_OK]

PR in (Report capabilities): bad field in cdb or parameter list (perhaps unsupported service action) (SCSI-3 PR supported)
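The two disks fail in different ways. /dev/sdb rejects the PERSISTENT RESERVE IN command outright, whereas /dev/sdi rejects only the REPORT CAPABILITIES service action, which is why it was read as PR-capable. Below is a sketch of that heuristic as a function. It is an assumption drawn from these two outputs, not an official IBM test. `pr_support` is a hypothetical name.

```shell
# Sketch of the heuristic used above (an assumption drawn from these two
# outputs, not an official IBM test). Prints yes / likely / no / unknown.
pr_support() {
  # REPORT CAPABILITIES succeeded outright: the target implements PR IN.
  if out=$(sg_persist -c "$1" 2>&1); then
    echo "yes"
    return 0
  fi
  case "$out" in
    # The whole PR IN command was rejected, as with /dev/sdb.
    *"command not supported"*) echo "no" ;;
    # The command was recognised and only this service action refused, as with /dev/sdi.
    *"bad field in cdb"*) echo "likely" ;;
    *) echo "unknown" ;;
  esac
}

# Usage (as root): pr_support /dev/sdi
```

A more direct confirmation is `sg_persist -k <device>` (READ KEYS), which should succeed on a disk that implements SCSI-3 PR.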

Set the tiebreaker disk again:

suse1:~ # db2cluster -cm -set -tiebreaker -disk /dev/sdi

Configuring quorum device for domain 'db2domain_20181223121052' ...

Configuring quorum device for domain 'db2domain_20181223121052' was successful.

suse1:~ #

Simulated the primary CF going down again: this time the standby CF took over successfully.
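Put together, the sequence that worked in this thread has three steps. First check PR support, then set the disk as the cluster-manager tiebreaker, then re-read the setting. Below is a sketch of that sequence. `set_cm_tiebreaker` is a hypothetical wrapper and must run as root on a cluster host. The earlier /dev/sdb setting reverted to Operator only some time after it was applied, so it is worth rerunning `db2cluster -cm -list -tiebreaker` after the failover test as well.

```shell
# Sketch (hypothetical wrapper, run as root on a cluster host): refuse disks
# that reject PR IN, set the cluster-manager tiebreaker, then re-read it.
set_cm_tiebreaker() {
  dev=$1
  # sg_persist -c reported an error for both disks in this thread, so its
  # exit status is ignored and only the message text is inspected.
  pr_out=$(sg_persist -c "$dev" 2>&1) || true
  case "$pr_out" in
    *"command not supported"*)
      echo "ERROR: $dev does not appear to support SCSI-3 PR" >&2
      return 1 ;;
  esac
  db2cluster -cm -set -tiebreaker -disk "$dev" || return 1
  # Confirm the cluster manager now reports this disk as its quorum device.
  tb=$(db2cluster -cm -list -tiebreaker)
  case "$tb" in
    *"type Disk with the following specifics: $dev"*) echo "tiebreaker: $dev" ;;
    *) echo "ERROR: tiebreaker is not $dev: $tb" >&2
       return 1 ;;
  esac
}

# Usage (as root): set_cm_tiebreaker /dev/sdi
```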

Note: download link for a cracked version of the StarWind software: https://www.jb51.net/softs/563810.html